There are plenty of misconceptions about the term “duplicate content”. For some reason, it has become the buzzword of the century. On forums, you will frequently read posts claiming that no content may be duplicated anywhere on the internet. Logic tells us this is incorrect, since newspapers have been publishing the same articles for years. Likewise, syndication would be dead if this rumor were true. Yet every time someone starts a conversation about writing content for various article directories, someone else immediately chimes in to say that no two websites can use the exact same words or paragraphs. What is actually more accurate is that duplicate content within the same website is taboo.

Everyone agrees that search engines have built-in mechanisms to identify duplicate content. What most people do not grasp is why you should avoid duplicate content on your own site. Today, it is increasingly difficult to avoid, because for many site owners it happens unintentionally. Typically, it occurs because blog themes are poorly designed in how they distribute content; for example, the same post may appear in full on its own page, on the home page, and on category, tag, and date archives. In this article, we discuss specifically why you should avoid duplicate content on your site. In the next article, we will show you ways to prevent duplicate content from appearing on your WP site.

Spam – Duplicate content is most commonly seen as spamming. When webmasters realized they could “play or dupe” the search engines with thousands upon thousands of pages, often by using Apache's mod_rewrite module to serve the same content under many different URLs, they flooded the internet with identical content. They used it to monopolize the top ranking positions and funnel people to their websites. The pages offered visitors no benefit, substance, or value. Today, search engines continually look for duplicate content in order to stamp out spam.

Poor Earnings or Commissions – Along the same lines as spamming, webmasters found that the more pages they had, the more money they could make from the same content. Their ROI was high because they never had to develop new content for those pages. When pay-per-impression deals were the rage, with companies paying webmasters every time a banner was seen, duplicate content was the norm. Nowadays, a site with excessive duplicate content is unlikely to be accepted into an advertising network at all.

Angry Users – Whether you own a hobby site that is important to you or earn your living from one or many websites, duplicate content is not going to make your visitors happy. If they have to read the same thing over and over on different pages, they will eventually give up and leave your site for good. A website without readers defeats its own purpose.

Banned or Filtered by Search Engines – Another “technique” webmasters tried was duplicate websites. Once they hit on a successful topic or niche, they simply made clones. The only things that changed were the domain name and sometimes the colors or graphics. They might even have hosted the sites on different servers, but search engines read text, so the clones were flagged anyway. Sites that clone others or carry excessive duplicate content are typically filtered out of, or removed from, the search results. Nowadays, they might not even make it to the results pages.
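To make “search engines read text” a little more concrete, here is a minimal sketch of one well-known textbook way to spot near-duplicate pages: break each page into overlapping word sequences (shingles) and compare the sets with Jaccard similarity. Search engines do not publish their exact methods, so treat this as an illustration only; the function names, the shingle size of 3 words, and the 0.9 threshold are all assumptions chosen for the example.

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word shingles (overlapping word sequences) in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        # Too short for k-word shingles: treat the whole text as one shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def looks_duplicate(page_a: str, page_b: str, threshold: float = 0.9) -> bool:
    """Hypothetical check: flag two pages whose shingle sets overlap heavily."""
    return jaccard(shingles(page_a), shingles(page_b)) >= threshold


if __name__ == "__main__":
    original = "Learn how to grow tomatoes in containers on a sunny balcony."
    clone = "Learn how to grow tomatoes in containers on a sunny balcony."
    rewrite = "Container gardening lets you raise peppers indoors all winter."
    print(looks_duplicate(original, clone))    # True
    print(looks_duplicate(original, rewrite))  # False
```

A clone site that changes only its domain name, colors, or graphics keeps exactly the same text, so its shingle set matches the original and the score is 1.0; moving it to a different server changes nothing in this comparison. At web scale, this idea is usually approximated with hashing techniques such as MinHash or SimHash rather than by comparing full sets.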

As you have probably surmised by now, duplicate content on the same website is a serious issue. It should not be taken lightly when building websites or choosing themes that perpetuate the problem. Understanding why you should avoid duplicate content is the key to fixing the problem and preventing it from happening.

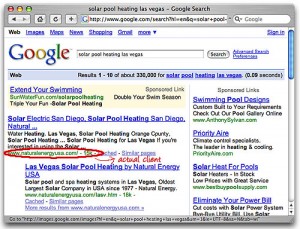

The second is making sure the website that links back covers the same theme as your website. This makes your website more relevant than other websites in the same search. Backlinks are important to SEO because, as you build them up, you boost your website and have a greater chance of getting indexed faster. Not only do they help in the short term, but in the long run they also help increase traffic to your site.

hat your web host will not negatively affect your ranking.